KNIME: GPT3 Component

As many of you know, lately I’ve been focusing on KNIME, a no-code / low-code platform, to explore flexible solutions for both data processing (ETL) and natural language processing (NLP).

Using state-of-the-art language models like GPT-3 within visual tools lets non-technical users leverage advanced AI capabilities without writing code. Together with my colleagues Alejandro Ruberte and Miguel Salmerón, I designed this component to integrate OpenAI directly into KNIME workflows.

A Brief Introduction to GPT3

GPT3 (Generative Pre-trained Transformer 3) is one of OpenAI’s most advanced models. Its architecture is designed for text generation, which makes it usable for almost any NLP task (classification, extraction, summarization) through few-shot or zero-shot prompting.

Our idea was to create a generic and easy-to-use component to complete information in tables, leveraging these capabilities.

Use Case: Topic Modeling

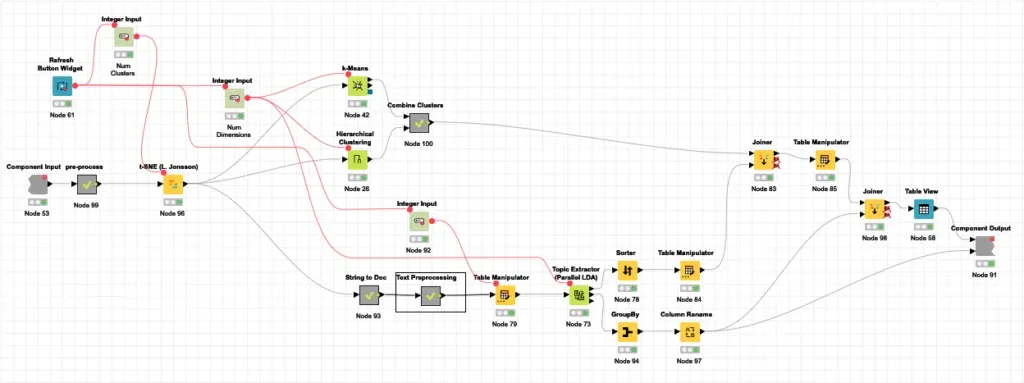

Imagine you need to analyze current literature on quantum computing. The usual process involves:

- Retrieval: Querying sources like Semantic Scholar (S2).

- Processing: Applying clustering techniques like K-Means or LDA (Latent Dirichlet Allocation).

- Description: This is where traditional topic modeling falls short: it produces lists of keywords but not a natural-language description of each topic. GPT3 fills that gap.

The Workflow in KNIME

The full workflow is divided into several modular stages:

- S2 API Search: Retrieves articles from Semantic Scholar using standard REST nodes.

- Clustering: Applies techniques based on embeddings (SPECTER) and parallel LDA.

- GPT3 Info Completion: The custom-designed component that generates topic descriptions.
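As a rough illustration of the first stage, the request that a REST node sends to Semantic Scholar can be sketched in Python. This is a minimal sketch, not the workflow's actual configuration: it assumes the public Graph API paper-search endpoint, and the helper names (`build_s2_search_url`, `extract_rows`), the chosen fields, and the limit are all hypothetical.

```python
from urllib.parse import urlencode

# Assumed endpoint: the public Semantic Scholar Graph API paper search.
S2_SEARCH_URL = "https://api.semanticscholar.org/graph/v1/paper/search"


def build_s2_search_url(query, fields=("title", "abstract"), limit=100, offset=0):
    """Return the GET URL that a REST node (or any HTTP client) would request."""
    params = {
        "query": query,
        "fields": ",".join(fields),  # comma-separated list of paper fields
        "limit": limit,              # page size
        "offset": offset,            # start index, for paging through results
    }
    return f"{S2_SEARCH_URL}?{urlencode(params)}"


def extract_rows(response_json):
    """Flatten the 'data' array of a search response into table-like rows."""
    return [
        {
            "paperId": paper.get("paperId"),
            "title": paper.get("title"),
            "abstract": paper.get("abstract"),
        }
        for paper in response_json.get("data", [])
    ]


url = build_s2_search_url("quantum computing", limit=50)
print(url)
```

Each row returned by `extract_rows` corresponds to one article, which is the shape the clustering stage would expect as input.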

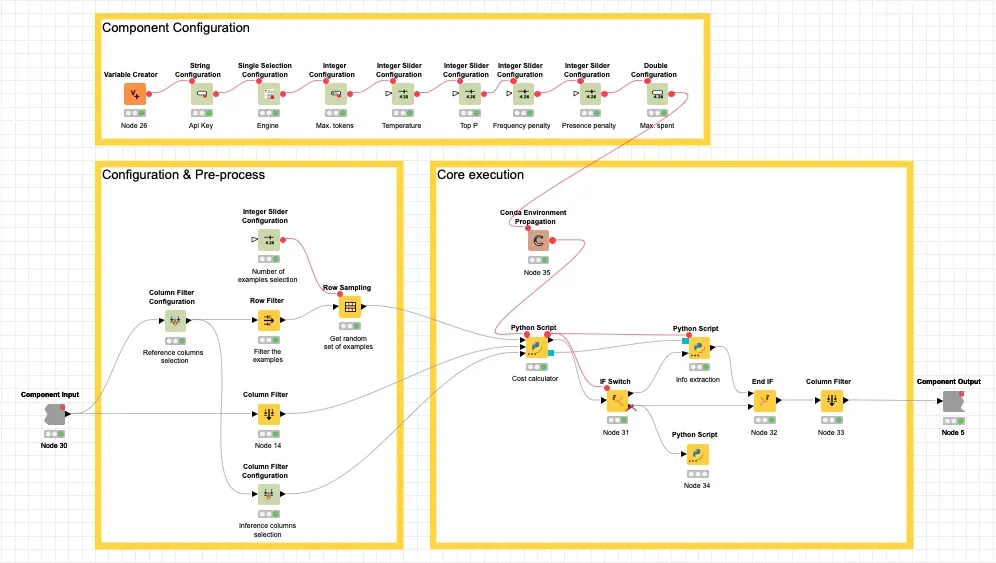

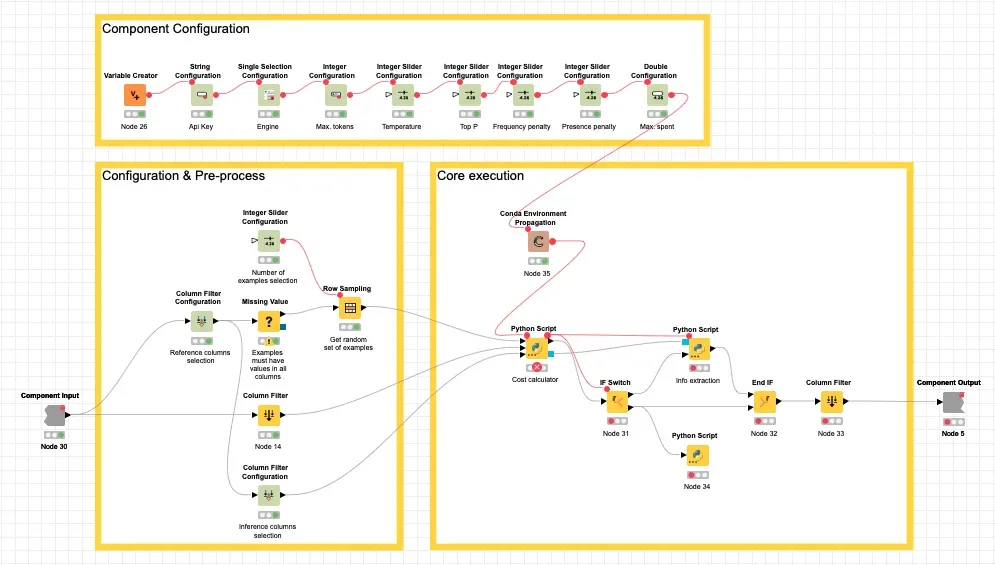

GPT3 Info Completion Component Details

The component encapsulates technical complexity and exposes a simple interface:

- Engine Configuration: Allows selecting the OpenAI model (Davinci, Curie, etc.).

- Cost Management: Includes a token calculator (using the GPT2 tokenizer) to estimate spending before executing the API call.

- Spending Limit: Allows setting a maximum budget to avoid surprises in your OpenAI bill.

- Few-shot Learning: You can include example rows in your table to guide the model on the desired output format and style.
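The cost estimate and spending limit can be pictured with a short Python sketch. Several things here are assumptions for illustration only: the component counts tokens with the GPT2 tokenizer, whereas this sketch uses a crude "about 4 characters per token" heuristic to stay dependency-free; the prices are placeholder values, not OpenAI's actual rates; and the function names are hypothetical.

```python
import math

# Placeholder prices in USD per 1,000 tokens (illustrative values only,
# NOT OpenAI's actual pricing).
PRICE_PER_1K_TOKENS = {"davinci": 0.02, "curie": 0.002}


def estimate_tokens(text):
    """Crude stand-in for a tokenizer: roughly 4 characters per token."""
    return math.ceil(len(text) / 4)


def estimate_cost(prompts, engine, max_completion_tokens):
    """Upper-bound cost: prompt tokens plus the maximum completion per row."""
    total_tokens = sum(estimate_tokens(p) + max_completion_tokens for p in prompts)
    return total_tokens / 1000 * PRICE_PER_1K_TOKENS[engine]


def check_budget(prompts, engine, max_completion_tokens, budget_usd):
    """Return the estimated cost, or raise before any API call is made."""
    cost = estimate_cost(prompts, engine, max_completion_tokens)
    if cost > budget_usd:
        raise ValueError(
            f"Estimated cost ${cost:.4f} exceeds the budget of ${budget_usd:.4f}"
        )
    return cost


prompts = ["Keywords: algorithm, code, security, cryptography\nLabel:"]
print(check_budget(prompts, "davinci", max_completion_tokens=20, budget_usd=1.0))
```

Checking the budget against the worst case (every completion using its full token allowance) keeps the estimate conservative, so the real bill should not exceed it under these assumptions.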

Results

In our tests on quantum computing, the model was able to infer accurate descriptions for the clusters generated by LDA. For example, starting from technical terms like “algorithm, code, security, cryptography”, GPT3 generated a coherent descriptive label such as “Quantum Security and Cryptography”.
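To make the few-shot idea concrete, here is a minimal Python sketch of how such a prompt could be assembled from example rows plus the row to complete. The prompt wording, the function name `build_few_shot_prompt`, and the example row are hypothetical and not taken from the component; only the final keyword list comes from the result described above.

```python
def build_few_shot_prompt(examples, keywords):
    """Build a completion prompt: solved examples first, then the open row."""
    lines = ["Give a short descriptive label for each list of topic keywords.", ""]
    for example_keywords, example_label in examples:
        lines.append(f"Keywords: {', '.join(example_keywords)}")
        lines.append(f"Label: {example_label}")
        lines.append("")
    # The last block is left unlabeled so the model completes it.
    lines.append(f"Keywords: {', '.join(keywords)}")
    lines.append("Label:")
    return "\n".join(lines)


# Hypothetical example row that shows the desired output format and style.
examples = [
    (["qubit", "error", "correction", "noise"], "Quantum Error Correction"),
]

prompt = build_few_shot_prompt(
    examples, ["algorithm", "code", "security", "cryptography"]
)
print(prompt)
```

With an empty `examples` list the same function yields a zero-shot prompt, which is a simple way to compare both strategies on the same clusters.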

Conclusions

This experiment shows that combining no-code tools (KNIME) with advanced AI capabilities (GPT3) allows you to:

- Automate complex analytical tasks in record time.

- Democratize access to AI for business profiles.

- Create reusable and scalable components within an organization.

You can download the full workflow from the KNIME Hub at this link.