Competitive AI Timeline 2025-2026: models, coding agents, and platforms

Between January 2025 and March 2026, the AI race stopped being a simple comparison of frontier models. Competition reorganized around full stacks: models, APIs, tool use, runtimes, observability, connectors, governance, computer use, and coding-agent products that could actually run in production.

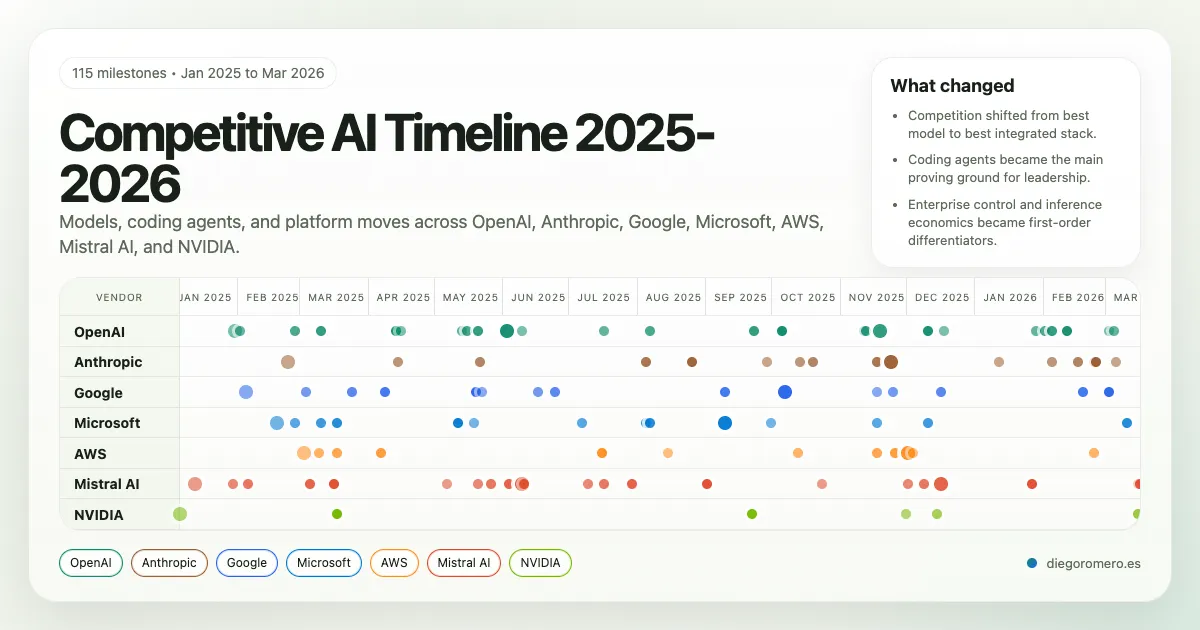

This piece turns the original chronology into a web-native article. Instead of a long table, the material is presented as an interactive horizontal timeline that lets you scan 115 milestones, open event-level context, and compare OpenAI, Anthropic, Google, Microsoft, AWS, Mistral AI, and NVIDIA in a single surface.

How to read this piece

- The top section contains the full interactive timeline.

- Each card shows date, surface, and model; the deeper strategic context lives inside the tooltip.

- The analytical layer remains below it: vendor matrix, cross-vendor patterns, and key inflection points.

What this chronology captures

The most important signal is not simply which company shipped the strongest model at a given moment. It is which company best combined model capability, developer interface, and deployment infrastructure for enterprise use. That is where the market structure changed: OpenAI and Anthropic pushing the coding-agent standard, Google leaning into open interoperability, Microsoft packaging enterprise control, AWS productizing agent operations, and NVIDIA shifting the conversation toward inference economics.

Vendor Comparison Matrix

This matrix uses the chronology as its base layer and compares each vendor across four dimensions: foundation models, agent ecosystem depth, enterprise positioning, and infrastructure and inference strength.

| Vendor | Foundation Models | Agent Ecosystem | Enterprise Position | Infrastructure and Inference |
|---|---|---|---|---|
| OpenAI | Strongest closed-model mix for reasoning, coding, and productized model tiers from o3/o4-mini to GPT-5. | Most complete developer-facing agent stack: Responses API, remote MCP, Codex, ChatGPT Agent, and computer-use surfaces. | Massive developer pull and direct product usage, with enterprise adoption often amplified by partners and hosted channels. | Less differentiated on raw infrastructure than hyperscalers, but very strong at turning model capability into usable software workflows. |
| Anthropic | Consistently strong coding and long-horizon agentic models, with Claude 3.7, Claude 4, Opus 4.1, and Sonnet 4.5. | Claude Code, Agent SDK, Research, Workspace integrations, and browser control build a coherent coding-agent product family. | Especially strong in regulated and engineering-heavy environments where reliability and safety posture matter. | Competes more through model quality, safety, and workflow depth than through standalone cloud infrastructure breadth. |
| Google | Gemini 2.0 to 2.5 rebuilt Google’s frontier position with strong multimodal and coding performance. | ADK, Agent2Agent, Agent Engine, Gemini CLI, Code Assist agent mode, and computer-use tools emphasize open interoperability. | Vertex AI gives Google a credible enterprise control plane, especially for teams that want open standards and multimodal breadth. | Well positioned on managed runtime and multimodal APIs, though still less dominant than AWS and Microsoft in enterprise distribution. |
| Microsoft | Wins less by proprietary base-model leadership than by hosting the right frontier models fast inside Foundry. | Agent Service, Agent Framework, Deep Research, unified APIs, MCP, A2A, and model routing make Foundry a broad enterprise agent factory. | Strongest enterprise-control story in the set: governance, private networking, reserved capacity, and integration into existing Microsoft estates. | Very strong at enterprise packaging and inference optionality through Azure, NVIDIA integrations, Fireworks, and OpenAI distribution. |
| AWS | Platform-first approach, strengthened later by Nova and Bedrock model-service improvements rather than by a single standout frontier release. | Bedrock Agents, multi-agent collaboration, AgentCore, Gateway, observability, and browser automation form a deep operations-first stack. | Excellent fit for enterprises prioritizing control, IAM-native governance, and production operations over pure model novelty. | Best productization of inference economics and runtime operations, with caching, tiers, guardrails, and agent operations as managed services. |
| Mistral AI | Combines open and efficient models with a rising coding family across Codestral, Devstral, Magistral, and enterprise-oriented Medium tiers. | Agents API, Mistral Code, Le Chat Enterprise, Deep Research, and MCP connectors turn Mistral into a credible full-stack challenger. | Strongest differentiation is sovereignty: hybrid deployment, private infrastructure, VPC/on-prem options, and regional control. | Not hyperscaler-scale, but increasingly differentiated through Mistral Compute and enterprise deployment control. |
| NVIDIA | Important at the model layer through Nemotron and blueprints, but strategic weight still comes mainly from infrastructure and economics. | AI-Q, NIM, AgentIQ, data platform pieces, and desktop AI systems support the agent ecosystem more than they define a single end-user assistant. | Critical supplier and ecosystem shaper across both model labs and enterprise AI deployments. | Sets the pace for inference economics and deployment architecture via Blackwell, Dynamo, DGX, and large-scale partnerships. |

Executive Summary

Five cross-vendor patterns and the announcements that most clearly changed the competitive shape of the market since January 2025.

Cross-Vendor Patterns

- Competition shifted from best standalone model to best integrated stack. The most important moves combined models, tool use, runtime, observability, governance, and developer workflow surfaces.

- Coding agents became the main proving ground for model leadership. OpenAI Codex, Claude Code, Gemini CLI, Mistral Code, and AWS/Microsoft agent tooling all pushed software engineering into the center of the market.

- Open interoperability became strategic. MCP and A2A moved from emerging protocols to product-level differentiators across OpenAI, Google, Microsoft, AWS, Anthropic, and Mistral.

- Enterprise adoption depended as much on control as on intelligence. Private networking, IAM enforcement, governance, hybrid deployment, and observability repeatedly appeared in the most material launches.

- Inference economics and deployment architecture became first-order competitive variables. NVIDIA’s stack, AWS serving features, Microsoft multi-provider inference, and Mistral sovereignty moves all point to a market where runtime efficiency matters almost as much as model quality.

Key Inflection Points

Anthropic turns Claude into a real coding-agent product

Claude 3.7 Sonnet plus Claude Code reframed Anthropic as a software-engineering platform, not just a model vendor.

OpenAI standardizes the modern agent stack

Responses API and the Agents SDK created a clear baseline for tool use, tracing, and multi-step agent products.

Google makes open multi-agent interoperability a platform bet

ADK, Agent2Agent, and Agent Engine upgrades positioned Google around openness and cross-system orchestration.

Codex turns ChatGPT into a delegation surface for software work

This was the clearest moment when cloud coding agents became a user-facing product category rather than an API building block.

Claude 4 combines frontier coding capability with visible safety escalation

Anthropic tied model leadership, Claude Code GA, and ASL-3 protections into one launch, raising the bar on both capability and posture.

AWS turns agent operations into managed cloud infrastructure

AgentCore, observability, and Nova Act made runtime operations and browser action part of a cohesive enterprise platform story.

GPT-5 resets the coding and reasoning frontier

OpenAI moved the market to a new performance and tiering baseline across ChatGPT and API consumption.

The useful conclusion from this window is that the market no longer rewards isolated intelligence alone. It rewards the ability to turn that intelligence into a practical operating layer for technical work, operations, and real software.